When your app doesn’t perform as expected, or you’re testing new features, the best way to gauge the users’ reactions is to ask them directly. User testing has long been a part of our development process, and is a vital step to ensure our assumptions and hypotheses are correct. If they aren’t, we gain actionable feedback to eliminate problems before they happen on launch day.

Determine What to Test

While it can be helpful to simply watch a friend click through the app and ask them what they think of it, it often doesn’t give us enough useful data to resolve potential UX pitfalls. Before recruiting users, it’s crucial to identify key moments to observe during testing. These could be UI components (Is the button visible enough?), orientation markers (Do they know where they are?), multi-step workflows (Did they make it all the way through the checkout?), performance metrics (Did they give up before the page loaded?), new features, or more.

Our approach is to create an interview script composed of 6–10 key objectives. Ideally, these moments in the app are proposed resolutions to customer pain points, or new features designed to improve the users’ experience. The script should lead users naturally to these moments without explicitly telling them what to look for. The goal is to observe the user progress through the solution or feature as organically as possible. If the user knows what to look for, they won’t give you an authentic response.

Let’s use this example: Users weren’t completing a signup form, so your team shortened the form by eliminating some optional fields. Rather than asking your users something like, “Is it easy to sign up for an account?” give them a more general directive, observe how they progress through the form, and encourage them to narrate as they go. “Create an account and go to your dashboard,” for example, doesn’t place any emphasis on the form itself, but naturally brings users through the form flow.

At the end of your testing session, during an open Q&A discussion, feel free to then ask your tester something like, “Did we ask for too much information during the signup process?” Encourage your testers to elaborate on their experience—we’ve found that they often want to please the testing group by offering compliments (which are nice to hear, *blush*), but your goal is to find out whether or not your proposed solution actually works. Tell your testers to be brutally honest. Steel your ego. You want the truth; you can handle the truth. Your observations combined with an open-ended question will deliver more insight than a coerced response.

Who ya gonna call… to test your app?

Lucky for Fusionbox, our most recent UX testing client has thousands of engaged users, from which we selected eight to come to our offices for in-person interviews. However, not every organization is so fortunate. Startups, small companies, and teams making their first foray into app development won’t have a pool of willing-and-able folks to recruit. For these instances, we’ve relied on partner agencies like Lookback.io and Hotjar to round up individuals willing to participate in a virtual app-testing session. These services offer the same benefits as one-on-one in-person testing (no peer pressure to find fault or to hold back, and no temptation to agree with other testers instead of volunteering unique responses), with the added benefit that testers don’t need to be in the same physical location as you. You’ll have two-way video chat, script notes, and screen sharing to accurately mimic an in-person session.

We seek a diverse group of testers for these sessions. Device ecosystem, familiarity with the company and/or app, and demographics should all be drawn from a wide net. Too small a cohort might skew results.

How to Measure Success

To turn a testing session into the actionable feedback we’re after, we need to gauge how successfully our testers performed their scripted tasks, gather notes from the open-ended questions, and look for commonalities among the group.

Scripted Tasks

Most of the time, your testers will complete the requested tasks. Good job. They passed. But how quickly did they complete them? Did they get stuck somewhere? Did they go about it in an unconventional way? Did they need a nudge in the right direction? Your observations of the tester’s interaction with the app, as well as notes from their own narration, can give you more insight into just how usable the feature is. We like to use a school-grading rubric:

- A: The tester accomplished the task quickly through the desired route

- B: The tester accomplished the task, but after some slight hesitation or trial-and-error

- C: The tester accomplished the task, but took much longer than expected, and/or didn’t take the desired route

- D: The tester may not have accomplished the task, or only accomplished it after hints from the interviewer

- F: The tester could not accomplish the task, even with help from the interviewer

This can be very helpful when determining which features need more work, or more specifically, how much more attention should be paid to the UX of each feature. A grade of B probably calls for a slight UI change or some microcopy to raise it to an A. A grade of D likely means your team is headed back to the drawing board for another approach.
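If you’d rather tally the grades than eyeball them, here is a minimal Python sketch of one way to do it. It isn’t our actual tooling: the 4-to-0 point scale, the `summarize` function, and the sample session data are all assumptions made up for illustration. It groups grades by task and lists the lowest-scoring tasks first, so the features that need the most attention float to the top.

```python
from collections import defaultdict
from statistics import mean

# Points per letter grade. The 4-to-0 scale is an assumption for this sketch;
# the rubric itself only defines the letters.
GRADE_POINTS = {"A": 4, "B": 3, "C": 2, "D": 1, "F": 0}


def summarize(results):
    """Group grades by task and return {task: (average points, grades)}.

    `results` is a list of (tester, task, grade) tuples. The returned dict
    is ordered from the lowest average to the highest.
    """
    by_task = defaultdict(list)
    for _tester, task, grade in results:
        by_task[task].append(grade)

    summary = {
        task: (mean(GRADE_POINTS[g] for g in grades), "".join(sorted(grades)))
        for task, grades in by_task.items()
    }
    # Sort by average points so the weakest tasks come first.
    return dict(sorted(summary.items(), key=lambda item: item[1][0]))


# Hypothetical session data, for illustration only.
results = [
    ("tester1", "create account", "A"),
    ("tester2", "create account", "B"),
    ("tester3", "create account", "A"),
    ("tester1", "change search", "D"),
    ("tester2", "change search", "C"),
    ("tester3", "change search", "D"),
]

if __name__ == "__main__":
    for task, (average, grades) in summarize(results).items():
        print(f"{task}: {average:.2f} ({grades})")
```

An average is only a rough signal with a handful of testers, so keep the individual grades and your notes next to it. A single F can matter more than the mean suggests.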

Questions & Comments

After completing the scripted tasks (successfully or not), take a few minutes to answer any questions your testers have, and ask for some feedback on both the experience as a whole and the specific feature being tested. While users may have quickly earned an A grade on a task, they may comment that something about it was confusing, or that there was something they didn’t like. In contrast, a user who earned a lower task grade may have helpful insight as to why they couldn’t complete it. We often find common talking points during this phase of the interview that can help the overall design patterns improve (“I didn’t know I could click on that” or “It would be nice if I could…”, etc.).

Summarize your Q&A with common observations that can be translated into either practical changes to be made, or life-giving affirmations of what went well (we all need a pat on the back sometimes, amirite).

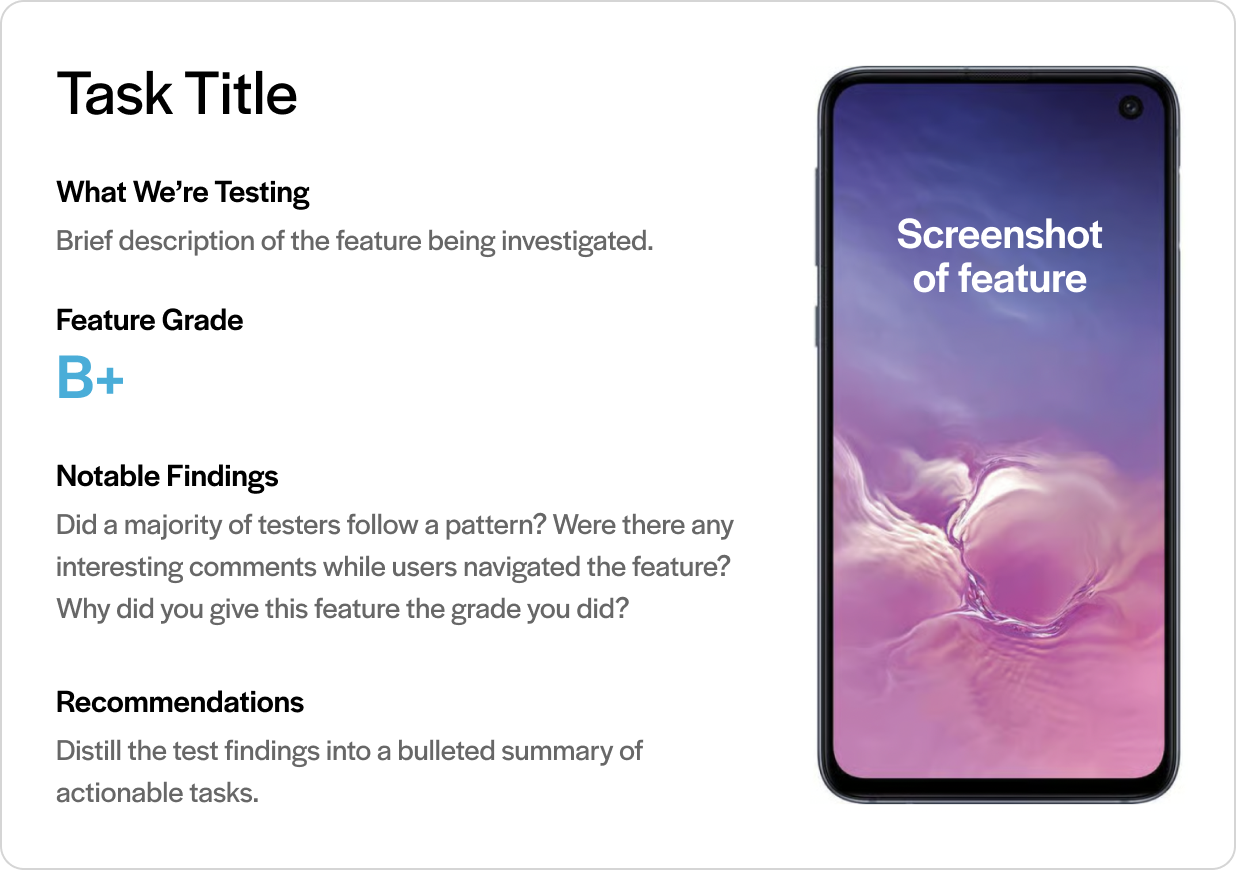

Our final report for each task looks something like this:

That wasn’t on the test.

In a recent interview session, we noted that more than half of our testers tried to tap on something that wasn’t meant to be tappable. We quickly learned that to anticipate users’ needs, we should just go ahead and make it tappable, so that it advances to the logical next step in the scripted task. Result? Happy users. Instead of a frustrated “I clicked but nothing happened,” we heard, “Oh, nice, I can click here to change my search.” Voilà. We learned something we weren’t even testing for, but only because we were observing real people use the app on a real device. Priceless. Chef’s kiss. Go get ’em.